Throughout 2025, frontier AI capabilities advanced rapidly. But the same capabilities that make these systems so useful also introduce new societal risks. Real-world evidence for several of these risks continues to grow—across malicious use, malfunctions, and systemic threats.

Against this backdrop, Concordia AI’s mission remains as critical as ever: ensuring that AI is developed and deployed safely and in alignment with global interests. We advance this mission through research, advisory work with leading AI companies and policymakers, and promotion of international dialogue.

Below are some of our key accomplishments in 2025. We’ve organized this list according to key axes of our work: international convenings, international research and public engagement, and contributing to China’s domestic AI safety and governance landscape. We end with organizational updates. For previous highlights, see our 2023, 2024 and mid-2025 reports.

International convenings

- Convening international AI safety dialogues in China, Singapore, and globally

- Hosted the AI Safety and Governance Forum at the World AI Conference (WAIC). This was Concordia AI’s flagship convening of 2025, bringing together around 30 distinguished experts from around the world, including Turing Award winner Yoshua Bengio; United Nations Under-Secretary-General Amandeep Singh Gill; Shanghai AI Lab Director ZHOU Bowen (周伯文); Special Envoy of the President of France for AI Anne Bouverot; Distinguished Professor of computer science at UC Berkeley Stuart Russell; and Peng Cheng Laboratory Director GAO Wen (高文). We had 200+ in-person attendees and 14,000+ livestream views. The Forum was covered by multiple media outlets, including Bloomberg, Wired, Caixin, IT Times, and Tech Review Africa. We also hosted or co-hosted multiple side events and expert workshops and served as official AI Governance Advisor for WAIC 2025.

- Co-hosted two international workshops with the Carnegie Endowment for International Peace, the Oxford Martin School AI Governance Initiative, the Oxford China Policy Lab, Tsinghua University Center for International Security and Strategy (CISS), and Tsinghua University Institute for AI International Governance (I-AIIG). The first workshop focused on “AI Safety as a Collective Challenge”, and was held at the French AI Action Summit in January. The second focused on “Early Warning and Crisis Coordination for Advanced AI” and was held at the World AI Conference in Shanghai in July (see the afternote at the end of this post).

- Co-hosted the AI Safety Forum at the Beijing Academy of AI Conference 2025 where technical experts from institutions including MIT, Fudan University, Singapore Management University, and Tsinghua University worked to build consensus on AI red lines.

- Organised an AI Risk Management Workshop on the sidelines of Asia Tech x Singapore (May 2025), with the support of the Infocomm Media Development Authority of Singapore (IMDA). The workshop brought together 20+ experts in AI safety, spanning policy, industry, AI assurance, and academia, with participants based across Singapore, China, the US, the UK, and the EU.

- Co-hosted a “Frontier AI in Cybersecurity” workshop with Nanyang Technological University CyberSG R&D Programme Office and UC Berkeley RDI, which brought together 25 leaders across government, law enforcement agencies, and leading AI labs. We also organised the AI Governance in Singapore panel at Lorong AI, on the sidelines of the Singapore International Cybersecurity Week 2025.

- Co-hosted events at the International Conference on Learning Representations (ICLR) 2025 Singapore: a “Frontier Governance Exchange” with Singapore AI Safety Hub, Lorong AI, and Safe AI Forum; an AI safety social attended by 130+ participants; and a “Misalignment and Control” workshop with FAR.AI and Singapore AI Safety Hub.

- Contributing to and participating in global and multilateral AI governance efforts

- Concordia AI CEO Brian TSE (谢旻希) participated in the International AI Standards Summit (Seoul, Dec 2–3) and spoke on a panel, as part of the expert delegation recommended by the National Standardization Administration of China.

- Brian Tse was invited as a Chinese civil society representative to the French AI Action Summit in the Grand Palais. He was also invited to a closed-door seminar hosted by the China AI Safety & Development Association (CnAISDA).

- Provided written inputs to UN consultations regarding the Independent International Scientific Panel on AI and Global Dialogue on AI.

- Spoke on the panel “From Principles to Practice—Governing Advanced AI in Action” at the AI for Good Summit 2025.

- Participated in the International Dialogues on AI Safety (Shanghai) and signed the Shanghai Consensus on “Ensuring Alignment and Human Control of Advanced AI Systems to Safeguard Human Flourishing”.

- Global AIxBiosecurity governance: We contributed to a number of critical global discussions at the intersection of AI and biosecurity:

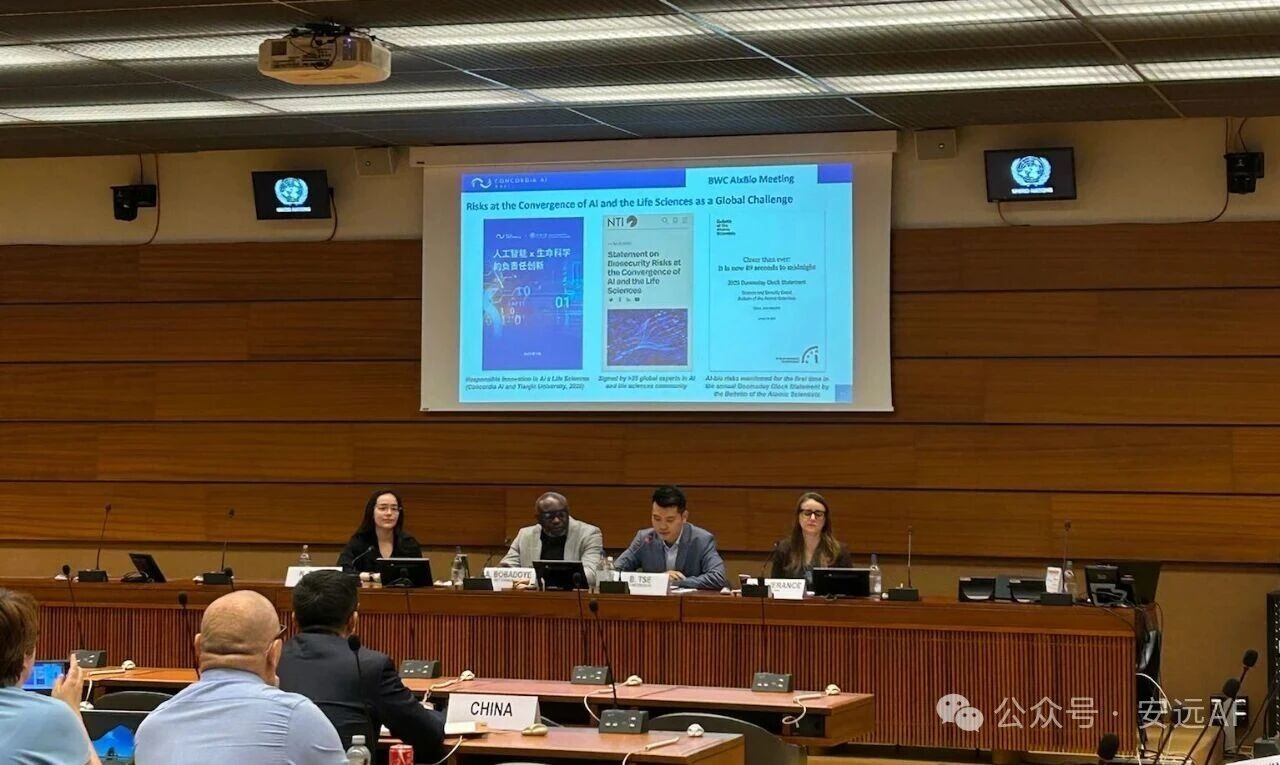

- Brian Tse signed the Statement on Biosecurity Risks at the Convergence of AI and the Life Sciences along with figures such as Andrew Yao, Yoshua Bengio, and George Church, and presented the Statement during the Sixth Session of the Working Group on the Strengthening of the Biological Weapons Convention, as part of the AIxBio Global Forum.

- Participated in an AIxBio tabletop exercise at the Munich Security Conference hosted by the Nuclear Threat Initiative, which led to the publication of the report “Safeguarding Against Global Catastrophe: Risks, Opportunities, and Governance Options at the Intersection of Artificial Intelligence and Biology.”

- Presented at a WHO dialogue on AIxBio implications for the Technical Advisory Group on the Responsible Use of the Life Sciences and Dual-Use Research.

- Co-developed and endorsed the “Recommendations to Governments on Mitigating AIxBio Risks” as part of the INHR/CNAS trilateral dialogue.

- Participated in roundtables on CBRN (chemical, biological, radiological, and nuclear) risks and on responsible innovation in AI for peace and security, hosted by the Stockholm International Peace Research Institute and the United Nations Office for Disarmament Affairs (UNODA).

- Participated in a workshop “International Standards for DNA Synthesis Screening – Towards Common Global Standards for Biosecurity” organised by the International Biosecurity and Biosafety Initiative for Science (IBBIS). The meeting marked the launch of the IBBIS International Standards Initiative.

- Spoke on a panel, “AI-Accelerated Biological Risk: Delving into Asia’s Challenges and Emerging Solutions,” organized by AI Safety Asia (AISA).

Concordia AI CEO Brian Tse speaking at the United Nations side event during the Sixth Expert Meeting of the Working Group on strengthening implementation of the Biological Weapons Convention (BWC) in Geneva. Source: Concordia AI.

International research and public engagement

- Analysis of China’s AI safety and governance landscape

- Published the State of AI Safety in China 2025 report, which was cited by Wired, Bloomberg, and People’s Daily; discussed the findings in a webinar with distinguished experts; gave briefings on the report to senior leadership at over ten global organisations.

- Our analysis was featured in multiple Nature News stories, including on China’s proposal for a World Artificial Intelligence Cooperation Organization (WAICO), on DeepSeek’s CEO LIANG Wenfeng (梁文锋), and on China’s domestic AI governance.

- Published 20 “AI Safety in China” newsletters, growing our subscriber base by 73% over the course of 2025.

- Brian Tse appeared on Nathan Labenz’s The Cognitive Revolution podcast to discuss China’s approach to AI development, safety, and governance, and on CGTN to discuss China’s approaches to AI innovation and global governance.

- Our International AI Governance Senior Research Manager Jason ZHOU (周杰晟) and our International AI Governance Part-time Researcher Gabriel Wagner analysed the AI safety implications of China’s April Politburo study session in a piece for the Stanford DigiChina Forum.

- Brian Tse authored an op-ed in Time Magazine, suggesting practical steps for AI safety dialogue between China and the US.

- Kwan Yee NG (吴君仪) and Gabriel Wagner spoke on China’s AI safety approach at AI Safety Asia’s Beijing Roundtable.

- Contributing to international AI safety and governance research

- Kwan Yee Ng contributed as a writer to the first International AI Safety Report in 2025 and to the second edition in 2026. The Report provides an up-to-date, internationally shared, and science-based understanding of AI capabilities and risks. It was overseen by an international Expert Advisory Panel nominated by over 30 countries and intergovernmental organisations.

- Contributed to the Singapore Consensus on Global AI Safety Research Priorities, alongside 100 researchers from 11 countries.

- Co-published the report Examining AI Safety as a Global Public Good alongside the Carnegie Endowment for International Peace and the Oxford Martin School AI Governance Initiative.

- Team members contributed to major papers on autonomous and agentic AI risks: Brian Tse and our AI Safety Research Manager DUAN Yawen (段雅文) contributed to “AI Deception: Risks, Dynamics, and Controls” (Peking University), and Duan Yawen worked on the World Economic Forum white paper “AI Agents in Action: Foundations for Evaluation and Governance”, the 2025 AI Agent Index led by MIT, and the research paper “Bare Minimum Mitigations for Autonomous AI Development.”

- Singapore-related research

- Published the State of AI Safety in Singapore report, the first comprehensive analysis of Singapore’s AI safety ecosystem, led by our International AI Governance Project Manager Jonathan Lee. He also presented the report at an AI governance in Singapore panel organised by Concordia AI in Singapore, at a talk organised by the Singapore AI Safety Hub, and at EAGxSingapore.

Contributing to China’s domestic AI safety and governance landscape

- Frontier AI safety risk management and best practices:

- Co-published the Frontier AI Risk Management Framework v1.0 with Shanghai AI Lab. This is China’s first comprehensive framework for managing severe risks from general-purpose AI models.

- The framework proposes a robust set of protocols designed to support general-purpose AI developers, with comprehensive guidelines for proactively identifying, assessing, mitigating, and governing a set of severe AI risks that pose threats to public safety and national security.

- The framework outlines a set of unacceptable hazards (red lines) and early warning indicators for escalating safety and security measures (yellow lines) for areas including: cyber offense, biological threats, large-scale persuasion and harmful manipulation, and loss of control risks.

- The framework was cited in various media outlets, including Caixin, IT Times, Xinhua, TIME, Sina, and Sinica Podcast.

- Signed strategic partnership agreements with several leading Chinese general-purpose AI developers to provide advice on AI safety and risk management best practices.

- Provided comprehensive advice on compliance with the EU AI Act and General-Purpose AI Code of Practice to leading Chinese general-purpose AI developers. This work included co-hosting a workshop on “EU Code of Practice & Industry Best Practices: Towards a Global Standard for AI Risk Management, Safety and Security” with SaferAI, the Oxford Martin AI Governance Initiative, and the Safe AI Forum.

- Presented on frontier AI risk management during a closed-door workshop at the China AI Industry Alliance’s 15th Plenum Meeting, in the context of its Disclosure of Practices on the AI Security and Safety Commitments.

- Presented on risk management for open-weight frontier models at a workshop (“Academic Symposium on AI Industry Development and Legislation”) at Tongji University.

- Frontier AI risk monitoring and evaluation:

- Contributed to the “Frontier AI Risk Management Framework in Practice: A Risk Analysis Technical Report” led by Shanghai AI Lab. We assessed critical risks from more than 20 frontier LLMs in the following areas: cyber offense, biological and chemical risks, persuasion and manipulation, uncontrolled autonomous AI R&D, strategic deception and scheming, self-replication, and collusion. The report was covered by Jack Clark’s Import AI.

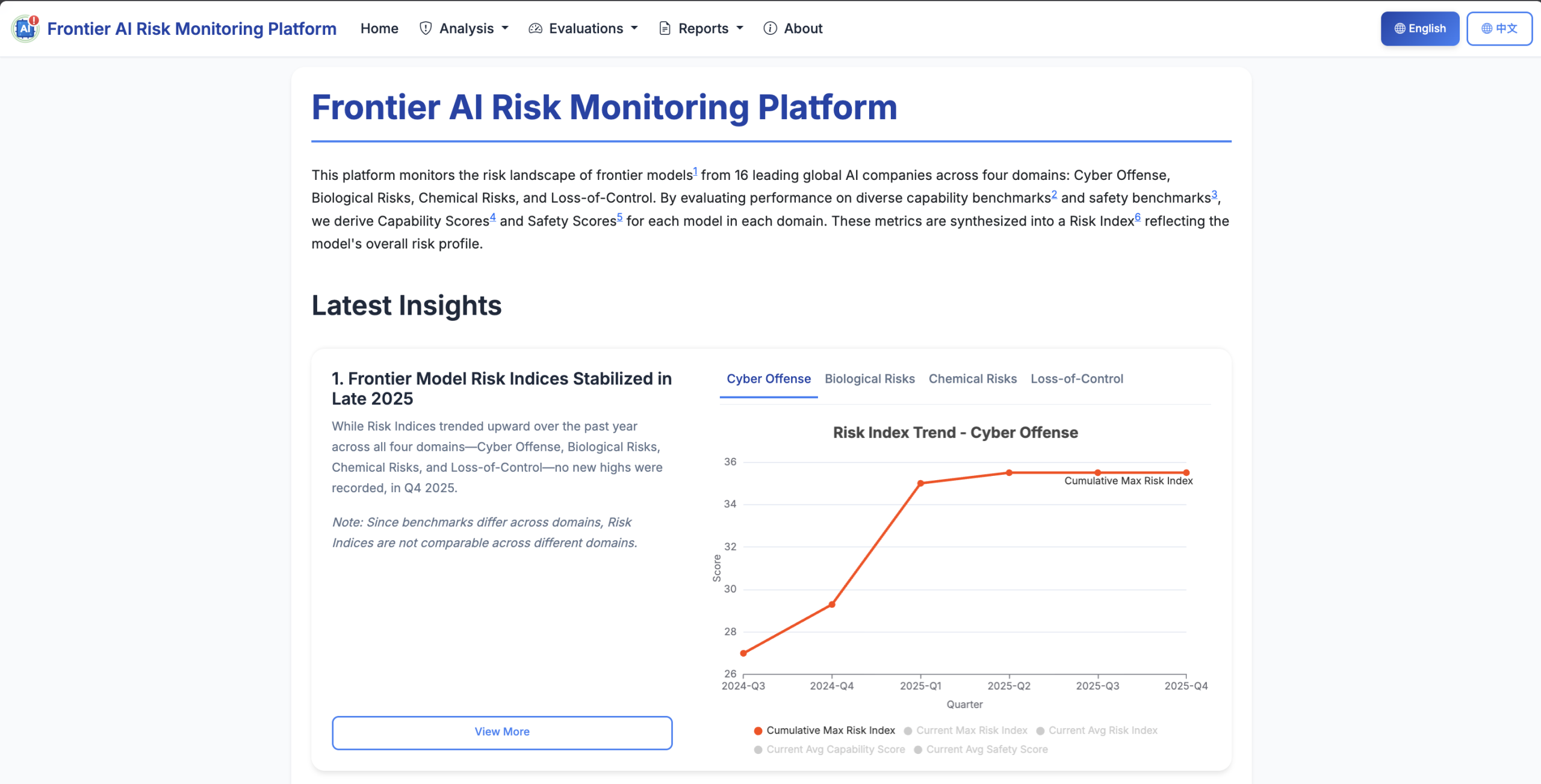

- Launched the AI Risk Monitoring Platform, designed to track and mitigate frontier AI risks across the cyber offense, biological threat, chemical threat, and loss-of-control domains. The platform evaluates 50 frontier LLMs from 15 leading developers across the US, China, and France, using 18 open-source benchmarks. Key outputs include a risk index dashboard and a detailed technical report. This project was spearheaded by our AI Safety Research Senior Manager WANG Weibing (王伟冰).

- The platform received coverage from several major media outlets, including People’s Daily, South China Morning Post, Xinhua’s Economic Information Daily, and IT Times.

- National standards and policy guidance: Concordia AI is a member of key national and industry technical committees, contributing to the development of China’s AI safety standards.

- National Information Security Standardization Technical Committee (SAC/TC260): As part of SAC/TC260 Special Working Group on Emerging Technology Safety, Concordia AI contributed to the standard for “Classification and Grading Methods for the Security of Artificial Intelligence Applications.”

- National Information Technology Standardization Technical Committee (SAC/TC28/SC42): As a member of the AI Subcommittee, Concordia AI contributed to “Artificial intelligence—Risk management capability assessment.”

- Ministry of Industry and Information Technology AI Standardization Committee (MIIT/TC1): Concordia AI joined the Working Group on AI Safety Governance.

- Guangdong-Hong Kong-Macao Greater Bay Area local standards: As a member of the Greater Bay Area working group of SAC/TC28/SC42, Concordia AI played a key role in the development of the Shenzhen local standard “Technical Framework for Value Alignment of Pre-trained AI Models.”

- AIxBiosecurity Governance:

- Published a Chinese language report “Responsible Innovation in AI x Life Sciences” with Tianjin University’s Center for Biosafety Research and Strategy. The 70-page report draws on more than 300 sources to explore AI-biotech convergence, and its benefits, risks, and governance recommendations for diverse stakeholders.

- Head of AI Safety and Governance (China) FANG Liang (方亮) presented the report at a biosecurity seminar hosted by China’s National Key Laboratory of Synthetic Biotechnology.

- Presented the report at the 2025 International Symposium on Global Biosecurity Governance and Cooperation, co-hosted by the National Biosecurity Expert Committee of China, Guangzhou Laboratory, and China Foreign Affairs University.

- Presented the report at the “Symposium on Trends and Development Strategies for the Integration of Biotechnology and AI” hosted by the China National Center for Bioinformation.

- Participated in the “Closed-door Seminar on DNA Synthesis Screening Technology and Policy” held at China Foreign Affairs University.

- WeChat publications:

- Published over 80 new posts on our WeChat Official Account, reaching over 4,900 subscribers across China’s AI ecosystem, including policymakers, industry professionals, and academic researchers.

- The articles provide Chinese stakeholders with updates on key global AI safety and governance developments. Highlights include a series of articles on frontier AI safety frameworks by our AI Safety and Governance Senior Manager CHENG Yuan (程远); legal explainers on the EU General-Purpose AI Code of Practice and California SB-53; and overviews of technical AI safety research on topics like deception and self-replication risks.

Organizational updates

- We established our Singapore office, welcoming our first full-time staff based in Singapore. Singapore’s status as a global hub and international convenor allowed Concordia AI to convene stakeholders from China, the US, and Southeast Asia, significantly expanding our capacity to shape international discussions on AI governance.

- We expanded the team from eight to twelve members in 2025, and will soon grow to 18 staff.

- We recruited our seventh cohort of 34 affiliates, who supported both China-focused and international workstreams.

- We launched a new website with refreshed branding, and updated our brochure and Chinese materials.

- We became a formal member of the Partnership on AI and joined the affiliate program of the International Association for Safe and Ethical AI.

Afternote: We co-organised the “AI Crisis Management” workshop on the sidelines of the Munich Security Conference in February 2026, as a continuation of the international workshop series held at the French AI Action Summit and the World AI Conference in 2025.